A couple of years ago at Agoda, teams and system owners started talking about coding standards. We started with C#. The idea was to come up with a list of recommendations for developers, for a few reasons.

One was that we follow polyglot programming here, so developers more familiar with Java, JavaScript and other languages would sometimes work on the C# projects and would often misuse, or get lost in, some of the features (e.g. when JavaScript developers find dynamics in C#, or Java developers get confused by extension methods).
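To make those two examples concrete, here is a small sketch of the kind of surprise we mean. The class and member names (`StringExtensions`, `IsBlank`, `Pitfalls`) are made up for illustration and are not taken from our codebase. The first method shows a `dynamic` member access that compiles but fails at runtime; the second shows an extension method being called on a `null` reference without throwing, because it is really just a static method call.

```csharp
using System;
using Microsoft.CSharp.RuntimeBinder;

public static class StringExtensions
{
    // Hypothetical extension method: a static method that reads like an
    // instance method on string at the call site.
    public static bool IsBlank(this string value) => string.IsNullOrWhiteSpace(value);
}

public static class Pitfalls
{
    // With dynamic, member lookup is deferred to runtime, so a typo or a
    // missing member is not caught by the compiler.
    public static string CallMissingMember()
    {
        dynamic booking = "AG-1234";
        try
        {
            return booking.Reference; // string has no member called Reference
        }
        catch (RuntimeBinderException ex)
        {
            return "runtime failure: " + ex.GetType().Name;
        }
    }

    // Looks like an instance call on null, but compiles to
    // StringExtensions.IsBlank(missing), so no NullReferenceException is thrown.
    public static bool ExtensionOnNull()
    {
        string missing = null;
        return missing.IsBlank();
    }
}
```

Neither behaviour is a bug in C#, but both tend to surprise someone arriving from JavaScript or Java, which is exactly the kind of thing a standard can explain.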

Beyond this, we want to encourage a level of good practice. Drawing on the knowledge of the people we have, we can advise on the potential pitfalls and drawbacks of certain paths. In short, the standards should not be prescriptive, as in “you must do it this way”. They should be more “Don’t do this”, but also teach at the same time, as in “Don’t do this, here’s why, and here’s some example code”. They also include some guidance, as in “We recommend this way, or this, or this, or even this, depending on your context”, but we avoid “do this”.

The output was the standards repository that we’ve now open sourced.

It’s primarily markdown documents, which makes the standards easy to write down, and lets us use pull requests and issues to start conversations around changes and evolve them over time.

But we had an “If you build it, they will come” problem. We had standards, but people either couldn’t find them or didn’t read them, and even if they did, they would probably forget half of them within a week.

So the question was: how do you go about implementing standards amongst 150 core developers and hundreds more casual contributors in the organisation?

We turned to static code analysis first. The Roslyn API in C# is, in my opinion, one of the most mature language APIs on the market, and we were able to write rules for most of the standards (especially the “Don’t do this” ones).

This gave birth to a cross-department effort that resulted in a code fix library that we like to call Agoda Analyzers.
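To give a flavour of what a “Don’t do this” rule looks like in Roslyn, here is a minimal sketch of an analyzer that flags any use of the `dynamic` type. It is not copied from Agoda Analyzers: the class name `NoDynamicAnalyzer`, the diagnostic ID `EX0001`, the message text and the help link are all hypothetical, and it assumes a project that references the `Microsoft.CodeAnalysis.CSharp` NuGet package.

```csharp
using System.Collections.Immutable;
using Microsoft.CodeAnalysis;
using Microsoft.CodeAnalysis.CSharp;
using Microsoft.CodeAnalysis.CSharp.Syntax;
using Microsoft.CodeAnalysis.Diagnostics;

[DiagnosticAnalyzer(LanguageNames.CSharp)]
public sealed class NoDynamicAnalyzer : DiagnosticAnalyzer
{
    // Hypothetical rule metadata, for illustration only.
    private static readonly DiagnosticDescriptor Rule = new DiagnosticDescriptor(
        id: "EX0001",
        title: "Avoid dynamic",
        messageFormat: "Don't use 'dynamic'; prefer a static type so errors are caught at compile time",
        category: "Usage",
        defaultSeverity: DiagnosticSeverity.Warning,
        isEnabledByDefault: true,
        helpLinkUri: "https://example.com/standards/no-dynamic"); // placeholder URL

    public override ImmutableArray<DiagnosticDescriptor> SupportedDiagnostics
        => ImmutableArray.Create(Rule);

    public override void Initialize(AnalysisContext context)
    {
        // Skip generated code and let Roslyn run the callback in parallel.
        context.ConfigureGeneratedCodeAnalysis(GeneratedCodeAnalysisFlags.None);
        context.EnableConcurrentExecution();
        // 'dynamic' is a contextual keyword, so it is parsed as an identifier name.
        context.RegisterSyntaxNodeAction(AnalyzeIdentifier, SyntaxKind.IdentifierName);
    }

    private static void AnalyzeIdentifier(SyntaxNodeAnalysisContext context)
    {
        var node = (IdentifierNameSyntax)context.Node;
        if (node.Identifier.ValueText != "dynamic")
        {
            return;
        }

        // Ask the semantic model what the identifier refers to, so that a
        // variable that merely happens to be named "dynamic" is not flagged.
        var symbol = context.SemanticModel
            .GetSymbolInfo(node, context.CancellationToken).Symbol as ITypeSymbol;
        if (symbol != null && symbol.TypeKind == TypeKind.Dynamic)
        {
            context.ReportDiagnostic(Diagnostic.Create(Rule, node.GetLocation()));
        }
    }
}
```

The descriptor is where the “here’s why” part can live: the message explains the problem, and a help link can point at longer documentation with example code.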

Initially we added them to projects via the published NuGet package, which makes them show up in the IDE. They are available here.

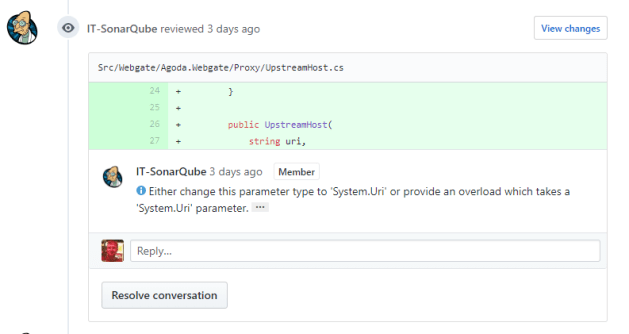

But like most linting systems, they tend to just “error” at the developer without much information, which we don’t find very helpful, so we quickly moved to SonarQube with its GitHub integration.

This gives a good experience for both code reviewers and contributors. The contributor gets inline comments on their pull request from our bot.

This gives the contributor time to fix issues before they reach the code reviewers, so most common mistakes are fixed prior to code review.

Also, the link (the three dots at the end of the comment) deep links into SonarQube’s web UI, to the documentation that we write for each rule.

This lets us deliver not just “Don’t do this”, but “Don’t do this, here’s why, and here’s some example code”.

Static code analysis is not a silver bullet, though. Things like design patterns are hard to encompass. But we find that you can catch most of the crazy stuff with it, leaving code review to be more about design and less about naming conventions, or the “wtf” moments reviewers have the first time a Node developer finds dynamics in C# and decides to have some fun.

We are also trying the same approach internally with TypeScript and Scala, the two other main languages we work in, so stay tuned for more on this.