Users, when presented with issues in software (error messages, unexpected behavior, etc.), tend to try to explain things with what limited knowledge they have, and the lion’s share of users don’t understand the inner workings of software. This is commonly known as abductive reasoning: the process of forming a conclusion based on the simplest possible explanation without much investigation.

Generally, I find users draw conclusions in this manner from their past negative experiences with your application or with others.

The first example I want to mention relates to users picking up on similar, repeated error messages.

I used to expose the Message property of the Exception object in the error message shown to users, in the hope that it would help with troubleshooting. This ended up causing serious issues with the users’ reasoning.

As you may know, one of the most common programming mistakes in .NET produces “Object reference not set to an instance of an object”, the null reference error. I once had a user say to me:

It’s been 6 months and we are still getting the same Object Reference Error coming back again, and again, when are you going to fix this Object reference error?

It was of course not the same error. While doing new development work I had made this common mistake and introduced a null reference error a few times. The user, though, saw the same message, even though it was in a different section of the application, and assumed it was the same problem. He was most annoyed because, as far as he could tell, it was the same issue he had already paid me 3 times to fix.

The second example is similar unexpected behavior.

I once had a Web Forms application where I didn’t take much care over assigning the default button in the panels, so the Enter key behaved quite unpredictably on some pages when pressed in a textbox. It wasn’t until we had a user who didn’t like her mouse that we really started picking up on it.

At first we fixed a couple of pages, then she found more and more. After 3-4 reports of the issue (each on a different page) we realized it was widespread, bit the bullet, and audited the whole application instead of fixing it on a per-report basis.

But this was enough, and the user was poisoned. Every time we spoke to her about an issue she had, she was sure it was “another problem with pressing the enter key”. 6 months later she was still telling us, “I think it’s another issue with pressing the enter key”.

This behavior is unavoidable. You can’t change human nature, but you can mitigate its effects.

Firstly, your error messages.

If you know what the error is, i.e. it’s a “handled error” such as your database server being offline or timing out, then write a custom error message for it in plain English.

A good error message should do two things:

1. Tell the user what’s wrong

2. Suggest what the user can do next

For example, if the exception you are handling is “SQLException: Timeout Exception”, the message could be:

The database is currently not responding. It may be temporarily offline or there may be a larger issue. Please try again shortly and contact support if the problem persists.
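One way to keep handled errors consistent is a single lookup from exception type to a message that always has both parts. This is only a sketch, written in Python for brevity; the same shape works in .NET. `DatabaseTimeoutError`, `FriendlyMessage`, `HANDLED_ERRORS` and `message_for` are made-up names for illustration, not part of any library.

```python
from dataclasses import dataclass
from typing import Optional


class DatabaseTimeoutError(Exception):
    """Hypothetical stand-in for a timeout raised by your data layer."""


@dataclass(frozen=True)
class FriendlyMessage:
    whats_wrong: str  # 1. tell the user what's wrong
    what_next: str    # 2. suggest what the user can do next

    def render(self) -> str:
        return f"{self.whats_wrong} {self.what_next}"


# One entry per error you actually know how to explain.
HANDLED_ERRORS = {
    DatabaseTimeoutError: FriendlyMessage(
        whats_wrong=(
            "The database is currently not responding. It may be "
            "temporarily offline or there may be a larger issue."
        ),
        what_next=(
            "Please try again shortly and contact support if the "
            "problem persists."
        ),
    ),
}


def message_for(exc: Exception) -> Optional[str]:
    """Return the friendly text for a handled error, or None if unknown."""
    for exc_type, message in HANDLED_ERRORS.items():
        if isinstance(exc, exc_type):
            return message.render()
    return None
```

Returning `None` for anything not in the table keeps the raw exception text away from the user, which leaves unknown errors to be dealt with separately.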

Next, if you have a global error handler like me, or are handling generic exceptions where you might not know what the error is, then be deliberately vague and keep your user in the dark. Don’t assume they can help you (they are not your friend 🙂).

A good error message in this situation is “Unknown Error”. Tell the user you don’t know what it is, because you don’t, or you would be handling it.

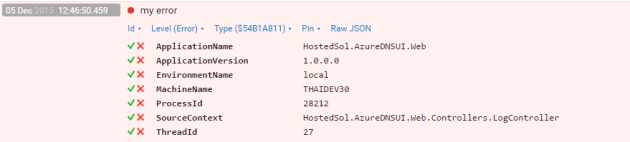

Unknown Error: Please contact support and quote them this number (44FHGI2)
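A global handler along these lines could look roughly like the sketch below (Python again, as an illustration rather than my actual .NET handler). It writes the full exception to the log under a short reference and shows the user only the generic text. The names `new_reference` and `handle_unknown_error`, the 7-character length, and the alphabet that leaves out easily confused characters are all assumptions of the sketch.

```python
import logging
import secrets

logger = logging.getLogger("app.errors")

# Assumption: skip characters that are easy to confuse when read aloud
# or typed (0/O and 1/I), leaving 32 symbols.
REFERENCE_ALPHABET = "23456789ABCDEFGHJKLMNPQRSTUVWXYZ"


def new_reference(length: int = 7) -> str:
    """Generate a short random code the user can quote to support."""
    return "".join(secrets.choice(REFERENCE_ALPHABET) for _ in range(length))


def handle_unknown_error(exc: Exception) -> str:
    """Log the real details, return only a vague message plus a reference."""
    reference = new_reference()
    # The exception message and stack trace go to the log, never to the user.
    logger.error("Unhandled error [ref=%s]", reference, exc_info=exc)
    return (
        "Unknown Error: Please contact support and quote them this "
        f"number ({reference})"
    )
```

A random code like this is only "fairly unique", so if collisions matter it is worth searching the logs by reference and date together.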

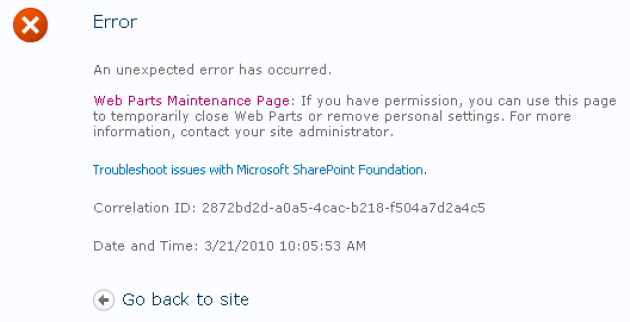

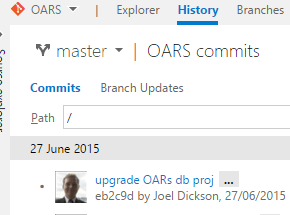

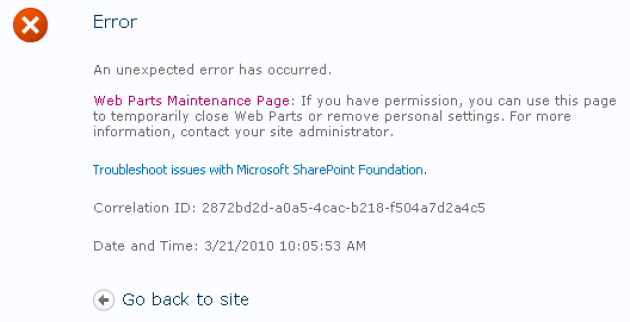

How do you know what it is? Use a correlation ID. SharePoint is an example of this, but not a good one. When you get an error in SharePoint, it gives you an ID that you can pass on to the support team so they can look up the logs. SharePoint is a bad example because it uses a GUID. Have you ever asked a user to read you a GUID over the phone?

If you ever have a reference number this long that people may be reading or typing out, make sure it has a check digit in it, and a damn good reason for being so long.
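For an alphanumeric code, one option for the check digit is the Luhn mod N algorithm, which is the familiar credit card check generalised to an alphabet of N symbols. The sketch below is my choice of technique for illustration, not something SharePoint or any particular product does. The 32-character alphabet and the function names are assumptions.

```python
# Assumption: a 32-symbol alphabet without 0/O and 1/I.
ALPHABET = "23456789ABCDEFGHJKLMNPQRSTUVWXYZ"


def _luhn_sum(code: str, first_factor: int) -> int:
    """Luhn mod N sum, walking the code from right to left."""
    n = len(ALPHABET)
    factor = first_factor
    total = 0
    for ch in reversed(code):
        addend = factor * ALPHABET.index(ch)
        factor = 1 if factor == 2 else 2
        # Add the two base-N "digits" of the addend together.
        total += addend // n + addend % n
    return total


def append_check_character(code: str) -> str:
    """Return the code with its check character added on the end."""
    n = len(ALPHABET)
    remainder = _luhn_sum(code, first_factor=2) % n
    return code + ALPHABET[(n - remainder) % n]


def is_valid(code_with_check: str) -> bool:
    """True if the final character matches the rest of the code."""
    code = code_with_check.strip().upper()
    if len(code) < 2 or any(ch not in ALPHABET for ch in code):
        return False
    return _luhn_sum(code, first_factor=1) % len(ALPHABET) == 0
```

With this in place, support can reject a mistyped reference immediately, before anyone goes searching the logs for a code that never existed.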

If you don’t have some sort of sequence you can grab a number from in your logging system or storage, then generating a fairly unique alphanumeric code isn’t overly hard, and there are lots of libraries out there for it. You should also feed your correlation ID into all logs from a user’s session if you can, not just the error.
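Feeding the ID into every log line is mostly a matter of setting it once at the start of the session or request and letting the logging setup stamp it on each record. Here is a minimal sketch using Python’s standard `logging` and `contextvars` modules. The logger name `app`, the log format, and the helper names are assumptions.

```python
import contextvars
import logging

# Holds the ID for the current session or request; "-" means none set yet.
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class CorrelationIdFilter(logging.Filter):
    """Stamp the current correlation ID onto every log record."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.correlation_id = correlation_id.get()
        return True  # never drop the record, only annotate it


def configure_logging(stream=None) -> logging.Logger:
    """Attach a handler whose output always includes the correlation ID."""
    handler = logging.StreamHandler(stream)  # defaults to stderr
    handler.addFilter(CorrelationIdFilter())
    handler.setFormatter(
        logging.Formatter("[%(correlation_id)s] %(levelname)s %(message)s")
    )
    logger = logging.getLogger("app")
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger
```

Calling `correlation_id.set(...)` when the session starts means that when a user quotes their code, a search of the logs shows what they were doing before the error as well as the error itself.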

Secondly, good habits.

If someone picks up a mistake you’ve made (like my Enter key example above), take some time to think, “How much of an issue is this going to be for the rest of my app?” Don’t let it get out of control. If you find some “bad practice” or even just “laziness”, take your time to check it out. Ctrl+Shift+F is your friend in this circumstance: you can usually get a good indication from a few smart searches of how widespread an issue may or may not be.

Lastly, take some pride in your work.

A job finished isn’t always a job well done. I recommend giving yourself as much “click around time” as you can after you’re done (also known as exploratory testing, if you need a fancy word to justify it to your boss). Just click around and use the application as much as you can, in all sorts of areas, and don’t forget tabbing, Enter keys, and the usual alternate methods of navigating around.